AI search visibility metrics and KPIs have become the most urgent measurement challenge in B2B marketing right now, because the old dashboards are lying to you. Rankings, impressions, and click-through rates were built for a world where users clicked links. That world is shrinking fast.

Why Traditional Metrics Are the Wrong Lens for AI Search Visibility

Here is the core problem most marketing teams are ignoring. AI search creates zero-click experiences where users get synthesized answers directly inside the interface, and your brand either appears in that answer or it does not exist in that moment.

Ranking #1 does not guarantee AI inclusion. AI systems select sources dynamically, vary their responses across sessions, and synthesize multiple entities into a single answer. Traditional reporting misses citations entirely.

The visibility gap between your traditional dashboard and your actual AI presence is where pipeline is leaking right now. Most content was never built for AI visibility, and most measurement systems were never built to catch the difference.

This is not an SEO problem. It is a discovery problem. And it requires an entirely new measurement layer.

What AI Search Visibility Metrics Actually Measure

AI Search Visibility Metrics and KPIs measure how frequently, prominently, and accurately your brand appears inside AI-generated answers across platforms like ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews.

These metrics capture inclusion, authority, prominence, and discoverability. They do not replace business KPIs. They sit underneath them as the early-warning system for pipeline influence.

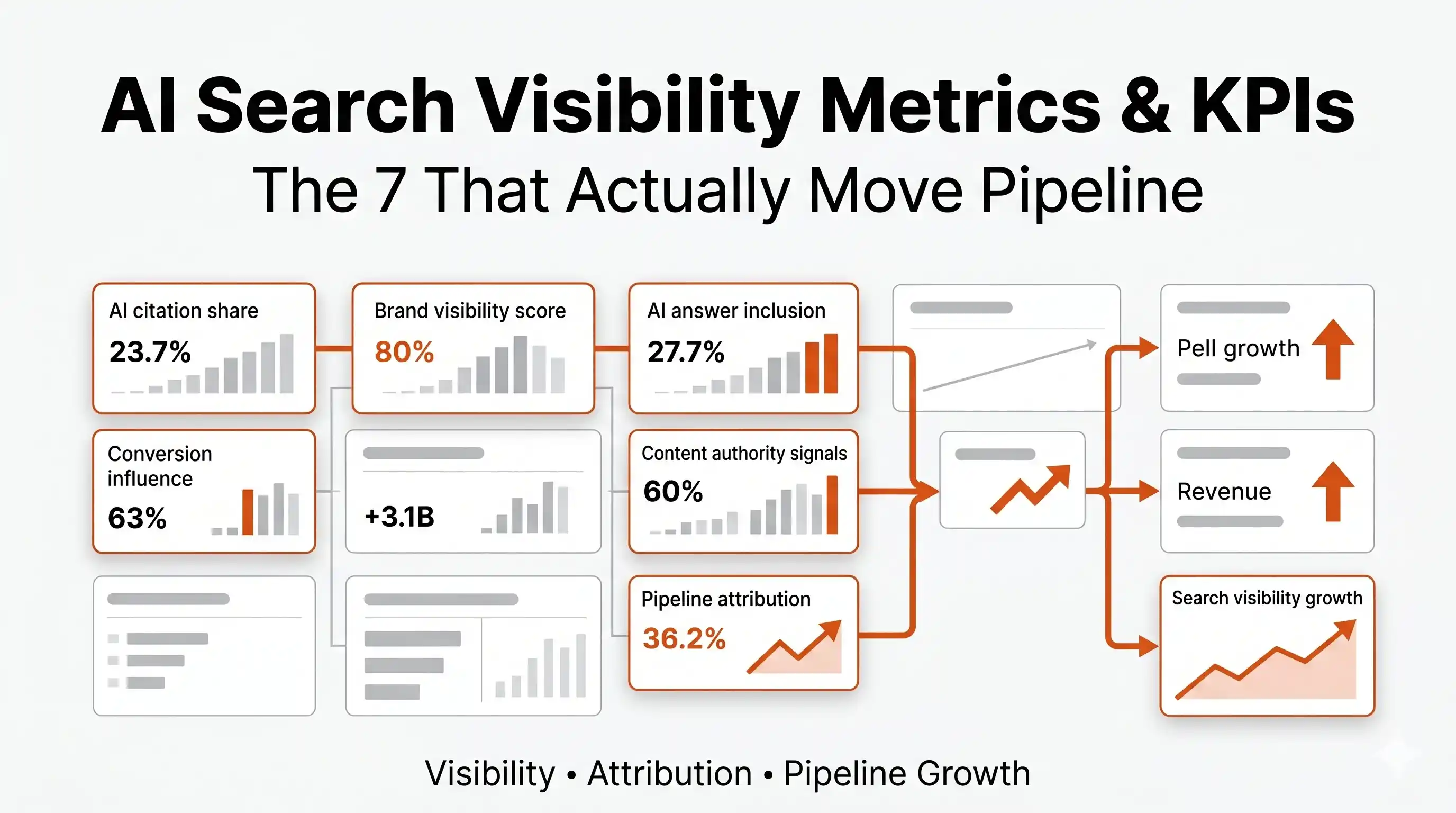

A quick visual guide to AI Search Visibility Metrics & KPIs. Learn the KPIs that reveal how your content performs in AI-driven search and where to improve.

Think of it this way: SEO measured rankings. AI visibility measures whether your brand becomes part of the answer. Those are fundamentally different things, and conflating them is costing brands real pipeline.

The 7 AI Search Visibility Metrics & KPIs That Actually Matter

Most teams are either tracking vanity metrics or applying outdated frameworks. Here are the seven AI visibility KPIs that give you a real picture of competitive position and pipeline influence.

1. Citation Share (The #1 AI Visibility KPI)

Citation Share is the percentage of AI citations your brand owns compared to every competitor in your category. It is the single most important metric in any AI visibility measurement framework.

Raw citation counts mislead you because different AI engines cite differently, at different frequencies, across different query types. Citation share normalizes that noise into a number you can actually benchmark and act on.

Formula: (Your Brand Citations / Total Category Citations) x 100

A brand with 400 citations in a category generating 2,000 total citations holds a 20% citation share. That number tells you far more than "we got mentioned 400 times." It tells you where you stand in the competitive conversation happening inside AI answers right now.
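
As a minimal sketch of the formula above, the calculation fits in one small Python function. The function name and the input checks are illustrative assumptions, not part of any particular tool; the numbers are the ones from the worked example.

```python
def citation_share(brand_citations: int, total_category_citations: int) -> float:
    """Return the brand's share of all category citations, as a percentage."""
    if total_category_citations <= 0:
        raise ValueError("total_category_citations must be positive")
    if not 0 <= brand_citations <= total_category_citations:
        raise ValueError("brand_citations must be between 0 and the category total")
    # (Your Brand Citations / Total Category Citations) x 100
    return 100 * brand_citations / total_category_citations


print(citation_share(400, 2000))  # 20.0
```

The category total has to include your own citations, otherwise the result is a ratio against competitors rather than a share.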


2. AI Share of Voice (AISoV)

AI Share of Voice tracks how often your brand appears relative to competitors across prompts, platforms, and query clusters. This is the AI-era version of market visibility, and it belongs on every executive dashboard in 2026.

AISoV gives you the panoramic view. Citation share tells you what fraction of your category's citations you own. AISoV tells you how present your brand is in the conversations buyers are having with AI before they ever reach a sales team.

If you are not in the AI answer, you are not in the deal. AISoV is the metric that confirms whether that statement applies to your brand right now.
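
One way to operationalize AISoV, assuming you already log which tracked brands appear in each sampled AI answer, is to compute each brand's share of mentions per platform. This is a sketch of one reasonable definition, not a standard formula. The record shape, the function name, and the brand names (Acme, Globex, Initech) are all hypothetical.

```python
from collections import Counter, defaultdict


def share_of_voice(observations, tracked_brands):
    """Per-platform share of brand mentions, as percentages.

    observations: iterable of (platform, brands_in_answer) pairs,
    one pair per sampled AI answer.
    """
    tracked = set(tracked_brands)
    mentions = defaultdict(Counter)
    for platform, brands in observations:
        # Count each tracked brand at most once per answer.
        for brand in set(brands) & tracked:
            mentions[platform][brand] += 1

    result = {}
    for platform, counts in mentions.items():
        total = sum(counts.values())
        result[platform] = {b: 100 * counts[b] / total for b in tracked_brands}
    return result


# Hypothetical sample: four answers across two platforms.
observations = [
    ("chatgpt", ["Acme", "Globex"]),
    ("chatgpt", ["Globex"]),
    ("perplexity", ["Acme"]),
    ("perplexity", ["Acme", "Initech"]),
]
sov = share_of_voice(observations, ["Acme", "Globex", "Initech"])
for platform, shares in sov.items():
    print(platform, {brand: round(pct, 1) for brand, pct in shares.items()})
```

Keeping the result split by platform preserves the cross-engine differences that a single blended number would hide. The same grouping could be applied to query clusters.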

3. Prompt Coverage

Prompt coverage is the percentage of tracked prompts where your brand appears. It measures topical reach, conversational breadth, and category relevance across the full spectrum of buyer-stage queries.

A brand with high citation share on one narrow query cluster but zero coverage on comparison and solution-seeking prompts has a structural visibility gap. Prompt coverage surfaces that gap before competitors exploit it.

Coverage should be mapped against buyer journey stages: informational, commercial, comparison, and solution-seeking prompts. Know exactly what your buyers are asking AI engines, across every ICP, persona, and market. That is the only way prompt coverage becomes a strategic instrument rather than a vanity number.
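
A rough sketch of coverage broken out by buyer journey stage might look like the following. It assumes each tracked prompt is tagged with a stage and that a prompt counts as covered if the brand appeared in at least one recorded run. Both choices, along with the function name and the sample prompts, are illustrative assumptions.

```python
from collections import defaultdict


def prompt_coverage_by_stage(results):
    """Percentage of tracked prompts per stage where the brand appeared.

    results: iterable of (stage, prompt, brand_appeared) tuples.
    """
    tracked = defaultdict(set)
    covered = defaultdict(set)
    for stage, prompt, appeared in results:
        tracked[stage].add(prompt)
        if appeared:
            covered[stage].add(prompt)
    return {
        stage: 100 * len(covered[stage]) / len(prompts)
        for stage, prompts in tracked.items()
    }


# Hypothetical tracking results for one brand.
results = [
    ("informational", "what is ai search visibility", True),
    ("informational", "how do ai engines pick sources", True),
    ("commercial", "best ai visibility platforms", True),
    ("commercial", "ai visibility tools for b2b", False),
    ("comparison", "vendor a vs vendor b", False),
    ("comparison", "alternatives to vendor a", False),
]
coverage = prompt_coverage_by_stage(results)
print(coverage)
```

In this made-up sample the brand covers every informational prompt and no comparison prompt, which is the kind of structural gap that stage-level coverage is meant to surface.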

4. Citation Prevalence

Citation prevalence is not about dominance. It is about consistency. The core question it answers is simple: when relevant prompts are asked, do you appear at all?

This metric catches the brands that show up brilliantly in a few optimized queries but go dark everywhere else. Consistent prevalence across your full prompt universe is the foundation that makes every other AI visibility KPI meaningful.

Low prevalence is often a content structure problem, not a content quality problem. Auditing and optimizing your existing content for citation-friendly structure, including FAQ schemas and entity clarity, is usually the fastest path to improving prevalence scores.

5. Brand Mention Prominence

Being cited matters. Being cited first, and being cited as the recommended option, matters significantly more. Prominence scoring captures the difference between appearing as a weak supporting mention and being positioned as the top choice in an AI answer.

A practical prominence scoring framework:

| Score | Meaning | Pipeline Impact |
| --- | --- | --- |
| 3 | Recommended / top choice in answer | Highest influence on buyer consideration |
| 2 | Mentioned positively as relevant option | Moderate influence, builds familiarity |
| 1 | Weak or peripheral mention | Minimal pipeline impact |

Tracking average prominence scores across your prompt universe reveals whether your AI citations are actually influencing buyer decisions or simply registering as background noise.
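
To make the averaging concrete, here is a small sketch that summarizes a list of prominence scores using the 1 to 3 scale above. It assumes scores are recorded only for answers where the brand was mentioned, since the scale does not define a score for absence. The function name and the sample scores are hypothetical.

```python
from collections import Counter
from statistics import mean

# Labels follow the three-point scale in the scoring framework.
PROMINENCE_LABELS = {
    3: "recommended",
    2: "positive mention",
    1: "peripheral mention",
}


def summarize_prominence(scores):
    """Return the average score and the count of answers at each level."""
    if not scores:
        raise ValueError("at least one score is required")
    invalid = [s for s in scores if s not in PROMINENCE_LABELS]
    if invalid:
        raise ValueError(f"scores must be 1, 2, or 3; got {invalid}")
    counts = Counter(scores)
    return {
        "average": mean(scores),
        "distribution": {PROMINENCE_LABELS[k]: counts[k] for k in (3, 2, 1)},
    }


# Hypothetical scores from six sampled answers.
summary = summarize_prominence([3, 2, 2, 1, 3, 2])
print(round(summary["average"], 2), summary["distribution"])
```

Reporting the distribution alongside the average matters because the same average can come from consistently moderate mentions or from a mix of top recommendations and peripheral ones.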

6. Sentiment and Accuracy

This is the most under-covered KPI in every AI visibility framework being published right now. AI visibility without accuracy and positioning control can actively damage your brand.

AI engines hallucinate. They pull outdated descriptions. They conflate your positioning with a competitor's. They repeat a claim you corrected two years ago. None of that shows up in citation share or prompt coverage numbers.

Track all of the following as part of your sentiment and accuracy layer:

- Incorrect product or service descriptions appearing in AI answers

- Outdated positioning or discontinued claims being cited

- Competitor confusion, where AI conflates your brand with a rival

- Hallucinated features, pricing, or capabilities

- Negative sentiment in how your brand is characterized

Visibility without accuracy is not an asset. It is a liability. This metric protects the value of every other KPI in your AI visibility stack.

7. AI Referral and Assisted Impact

AI search often produces invisible influence. A buyer researches your category in Perplexity, finds your brand in the answer, and three days later types your name directly into Google. That conversion path shows zero AI attribution in your standard analytics.

Visibility without revenue thinking is a vanity metric. The goal of tracking AI referral and assisted impact is to connect your AI visibility KPIs to pipeline outcomes: branded search lift, assisted conversions, demand generation impact, and influenced pipeline value.

Not all citations drive pipeline. That is the critical insight most AI visibility frameworks miss. A citation in a low-intent informational answer has a different revenue value than a citation in a high-intent comparison query. Measuring assisted impact forces you to make that distinction.

The AI Visibility Measurement Stack: How the 7 KPIs Work Together

These seven AI search visibility metrics and KPIs do not operate in isolation. They form a three-layer measurement stack.

| Layer | Metrics | What It Tells You |
| --- | --- | --- |
| Visibility Layer | Citation Share, Prompt Coverage, Citation Prevalence | Are you in the answer? How broadly? How consistently? |
| Authority Layer | AISoV, Brand Mention Prominence, Sentiment & Accuracy | Are you winning the answer? Are you winning it accurately? |
| Business Impact Layer | AI Referral & Assisted Impact | Is your AI visibility converting to pipeline? |

The mistake most teams make is measuring only the visibility layer and calling it done. That is how you end up with a citation share chart that looks great and a pipeline number that does not move.

The Biggest Mistake in AI Visibility Measurement

Most teams treat AI search like traditional search. They run a single query, note whether they appeared, and report it as a fact. That is not measurement. That is guessing with extra steps.

AI systems are probabilistic, dynamic, and conversational. The same query run ten times in a single session can produce materially different answers. Single-point measurements are directional at best and misleading at worst.

AI visibility should be measured as distributions and trends, not single-point rankings. That means repeated sampling across sessions, time-based trend analysis, and visibility distributions that show you the range of outcomes, not just one snapshot.

AI visibility scores are directional signals, not perfect truth. Teams that build their reporting around that nuance will make better decisions than teams chasing a single number on a monthly dashboard.
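
One way to treat a visibility number as a range rather than a point is to put a confidence interval around the observed appearance rate. The sketch below uses the Wilson score interval, a standard method for proportions. Choosing it here, and treating repeated runs as independent samples, are assumptions made for illustration; the function name is hypothetical.

```python
import math


def appearance_rate_interval(appearances: int, runs: int, z: float = 1.96):
    """Observed appearance rate plus a Wilson score interval.

    z = 1.96 corresponds to roughly 95% confidence.
    Returns (rate, lower_bound, upper_bound), all between 0 and 1.
    """
    if runs <= 0 or not 0 <= appearances <= runs:
        raise ValueError("need runs > 0 and 0 <= appearances <= runs")
    rate = appearances / runs
    denom = 1 + z**2 / runs
    center = (rate + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(rate * (1 - rate) / runs + z**2 / (4 * runs**2)) / denom
    return rate, max(0.0, center - half_width), min(1.0, center + half_width)


# Brand appeared in 7 of 10 repeated runs of the same prompt.
rate, low, high = appearance_rate_interval(7, 10)
print(f"rate={rate:.2f}, interval=({low:.2f}, {high:.2f})")

# A single run where the brand appeared: the interval is very wide.
single = appearance_rate_interval(1, 1)
print(f"rate={single[0]:.2f}, interval=({single[1]:.2f}, {single[2]:.2f})")
```

With ten runs the plausible range is still wide, and with one run it spans most of the scale, which is the quantitative version of the point that single-query checks are directional at best.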

Why AI Search Visibility Metrics Are Genuinely Hard to Measure

This is not a tooling problem you can solve by buying a bigger platform. The measurement challenges are structural.

Acknowledging these constraints is not weakness. It is what separates strategic teams from teams that will confidently report AI visibility numbers that mean nothing.

How to Build an AI Visibility Measurement Framework That Works

The operational framework is not complicated. What makes it work is discipline and the right intelligence layer underneath it.

Step 1: Define Strategic Prompt Clusters. Group prompts by buyer intent stage: informational, commercial, comparison, and solution-seeking. Map them to ICP segments and persona types. If you are measuring prompts that do not match real buyer behavior, every metric downstream is built on a false foundation.

Step 2: Track Across Multiple AI Engines. ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews all behave differently. A brand cited prominently in Perplexity may be invisible in Google AI Mode. Cross-engine measurement is not optional if you want an accurate picture of AI share of voice.

Step 3: Measure Repeatedly. One query does not equal a reliable measurement. You need repeated sampling across sessions and time windows to produce visibility distributions that reflect actual patterns rather than single-session noise.

Step 4: Benchmark Competitors Continuously. Track citation gaps, whitespace where competitors are not yet being cited, and comparative visibility shifts. Find every citation gap before a competitor claims it. That is the only defensible competitive posture in AI search right now.

Step 5: Connect Visibility to Business Outcomes. Map citation trends to branded search lift. Track influenced pipeline. Identify assisted conversions. If your AI visibility metrics do not have a path to pipeline, they are reporting metrics, not strategic intelligence.
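
As a rough illustration of Step 5, the sketch below checks whether weekly citation share moves together with branded search volume a set number of weeks later. Everything in it is an assumption for illustration: the function names, the one-week lag, and the sample numbers, which are made up so that the relationship is exact. A high correlation is a prompt for further investigation, not proof of attribution.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (spread_x * spread_y)


def lagged_correlation(citation_share, branded_searches, lag_weeks):
    """Correlate citation share with branded searches lag_weeks later."""
    if len(citation_share) != len(branded_searches):
        raise ValueError("series must cover the same weeks")
    if lag_weeks < 0 or len(citation_share) - lag_weeks < 2:
        raise ValueError("lag leaves too few overlapping weeks")
    earlier_share = citation_share[: len(citation_share) - lag_weeks]
    later_searches = branded_searches[lag_weeks:]
    return pearson(earlier_share, later_searches)


# Made-up weekly data: searches follow citation share by exactly one week.
weekly_share = [10, 12, 11, 15, 14, 18]
weekly_searches = [900, 1000, 1200, 1100, 1500, 1400]
r = lagged_correlation(weekly_share, weekly_searches, lag_weeks=1)
print(round(r, 3))
```

Real data will be noisier than this, and the right lag is something to test across several values rather than assume.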

How Omnibound Delivers AI Search Visibility Metrics & KPIs as Strategic Intelligence

Most tools report visibility. Omnibound provides the strategic intelligence and execution layer that turns measurement into action.

Omnibound is not a rank tracker rebuilt for AI search. It is an AI visibility intelligence platform that helps teams operate with precision across every dimension of AI discoverability.

Here is what Omnibound gives your team:

Omnibound transforms real buyer language, objections, and decision triggers into structured, AI-aligned content. That content performs in the visibility metrics that matter because it was built from buyer intelligence, not generic prompts that produce slop content.

The next era of marketing will be defined by intelligent action, not just endless data. Omnibound closes that gap by giving teams not just the measurement layer but the execution capability to act on what the metrics reveal.

Conclusion

The seven KPIs in this framework, Citation Share, AI Share of Voice, Prompt Coverage, Citation Prevalence, Brand Mention Prominence, Sentiment and Accuracy, and AI Referral and Assisted Impact, give you a complete picture of where your brand stands in the conversations buyers are having right now.

Measure them as distributions. Benchmark them against competitors. Connect them to pipeline. And use them to drive the content and optimization decisions that actually improve your position in AI-generated answers across every engine that matters. That is what it means to operate with strategic vision in AI search marketing in 2026.

FAQs

What are AI Search Visibility Metrics and why do they matter in 2026?

AI Search Visibility Metrics measure how frequently, prominently, and accurately your brand appears inside AI-generated answers on platforms like ChatGPT, Perplexity, and Gemini. They matter in 2026 because AI search is now influencing B2B buying decisions before buyers ever reach a sales team, making citation presence a direct pipeline factor.

How do you measure AI search visibility effectively?

Effective AI search visibility measurement requires defining strategic prompt clusters mapped to buyer intent stages, tracking citations across multiple AI engines simultaneously, and sampling repeatedly to build visibility distributions rather than relying on single-point snapshots. Single measurements are unreliable because AI outputs are probabilistic and change across sessions.

What is AI Share of Voice and how is it different from regular share of voice?

AI Share of Voice (AISoV) measures how often your brand appears relative to competitors across AI-generated answers, prompts, and query clusters. Unlike traditional share of voice, which measures media or ad presence, AISoV captures conversational discoverability inside AI answers where buyers are actively researching decisions.

What is citation share in AI search and how do you calculate it?

Citation share is the percentage of AI citations your brand owns compared to all competitors in your category, calculated as (Your Brand Citations / Total Category Citations) x 100. It is the most important single AI visibility KPI because it normalizes raw citation counts across engines and gives you a true competitive benchmark.

Why are traditional analytics insufficient for measuring AI search visibility?

Traditional analytics track clicks, impressions, and rankings, but AI search creates zero-click experiences where answers are delivered directly to users without traffic events. AI engines also produce dark attribution where a buyer researches your brand in AI search and converts days later with no AI source visible in your analytics.

Are AI visibility scores reliable enough to build strategy around?

AI visibility scores are directional signals, not perfect truth. Because AI outputs are dynamic and vary across sessions, individual scores carry uncertainty. Reliable strategic use requires repeated sampling, trend analysis over time, and treating the data as distributions rather than fixed rankings.

What tools measure AI visibility and citation tracking for B2B brands?

Dedicated AI visibility intelligence platforms like Omnibound track AI citations across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews, monitor conversational presence, identify competitor citation gaps, and connect AI visibility KPIs to pipeline outcomes. Traditional analytics platforms were not built for this measurement layer and cannot provide accurate AI citation data.
